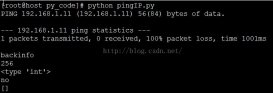

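We recently brought another batch of sites online, and as the number of sites grows, so does the complexity of managing them. As the saying goes, a big team is hard to lead; I have found that a large set of sites is just as hard to manage. Some of these sites are important and some are not. The core ones naturally get more attention, while the ones that go years without a problem gradually slip out of mind. Then one day one of them breaks without warning, and I end up scrambling to fix it. So managing these sites in a disciplined way is well worth it. Today we take the first step: big site or small, put monitoring on all of them. Leaving business metrics aside, at the very least we should be the first to know when a site becomes unreachable, rather than waiting for the business side to report it, which would make us look unprofessional. So let's look at how to monitor the availability of multiple websites with Python. The script is as follows: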


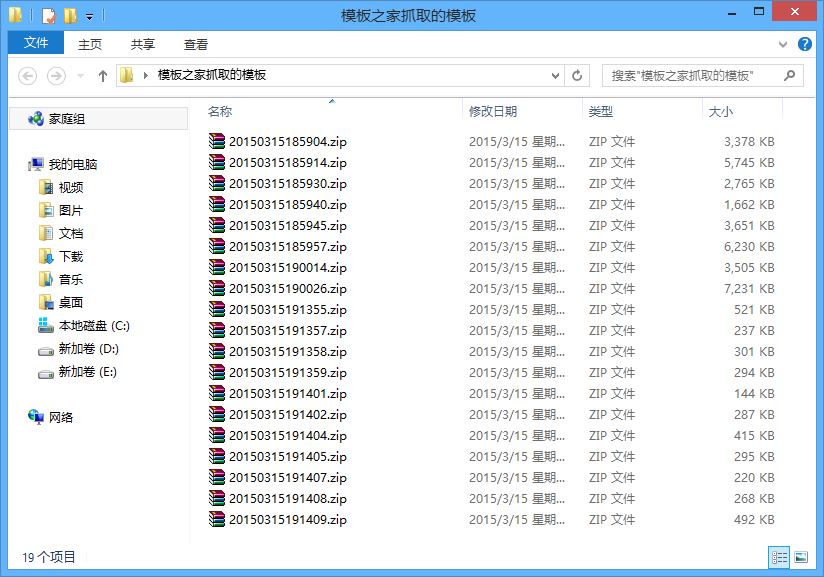

```python
#!/usr/bin/env python3
import logging
import os
import pickle
import socket
import sys
from http.client import HTTPConnection
from smtplib import SMTP

# Sender and recipients are placeholders, like the login credentials below.
fromaddr = 'xxxxx'
toaddrs = ['xxxxx']


def email_alert(message, status):
    """Send an alert mail; the status line is used as the subject."""
    server = SMTP('smtp.163.com:25')
    server.starttls()
    server.login('xxxxx', 'xxxx')
    # A blank line must separate the headers from the body.
    server.sendmail(fromaddr, toaddrs,
                    'Subject: %s\r\n\r\n%s' % (status, message))
    server.quit()


def get_site_status(url):
    """Return 'up' if a HEAD request to the site answers 200, else 'down'."""
    response = get_response(url)
    try:
        if getattr(response, 'status') == 200:
            return 'up'
    except AttributeError:
        # response is None when the connection failed.
        pass
    return 'down'


def get_response(url):
    """Issue a HEAD request; return None if the host cannot be reached."""
    try:
        conn = HTTPConnection(url)
        conn.request('HEAD', '/')
        return conn.getresponse()
    except socket.error:
        return None
    except Exception:
        logging.error('Bad URL: %s', url)
        sys.exit(1)


def get_headers(url):
    response = get_response(url)
    try:
        return getattr(response, 'getheaders')()
    except AttributeError:
        return 'Headers unavailable'


def compare_site_status(prev_results):
    """Return a checker that alerts when a site's status has changed."""
    def is_status_changed(url):
        status = get_site_status(url)
        friendly_status = '%s is %s' % (url, status)
        print(friendly_status)
        if url in prev_results and prev_results[url] != status:
            logging.warning(status)
            email_alert(str(get_headers(url)), friendly_status)
        prev_results[url] = status
    return is_status_changed


def is_internet_reachable():
    """Treat the network as down only if both reference sites are down."""
    if (get_site_status('www.baidu.com') == 'down'
            and get_site_status('www.sohu.com') == 'down'):
        return False
    return True


def load_old_results(file_path):
    """Load the previous run's results, or an empty dict on the first run."""
    pickledata = {}
    if os.path.isfile(file_path):
        with open(file_path, 'rb') as picklefile:
            pickledata = pickle.load(picklefile)
    return pickledata


def store_results(file_path, data):
    with open(file_path, 'wb') as output:
        pickle.dump(data, output)


def main(urls):
    logging.basicConfig(level=logging.WARNING, filename='checksites.log',
                        format='%(asctime)s %(levelname)s: %(message)s',
                        datefmt='%Y-%m-%d %H:%M:%S')
    pickle_file = 'data.pkl'
    pickledata = load_old_results(pickle_file)
    print(pickledata)
    if is_internet_reachable():
        status_checker = compare_site_status(pickledata)
        # list() consumes the lazy map so every URL is actually checked.
        list(map(status_checker, urls))
    else:
        logging.error('Either the world ended or we are not connected to the net.')
    store_results(pickle_file, pickledata)


if __name__ == '__main__':
    main(sys.argv[1:])
```

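Key points of the script:

1. getattr() is a Python built-in function. It takes an object and an attribute name and returns the value of that attribute. If the attribute does not exist and no default is given, it raises AttributeError, which is how the script treats a failed connection (a response of None) as "down".

2. compare_site_status() returns a function defined inside it. The returned function keeps access to prev_results, so it can compare each new status with the one recorded on the previous run.

3. map() takes two arguments, a function and a sequence, and applies the function to each element of the sequence. In Python 3, map() returns a lazy iterator, so the result has to be consumed (for example with list()) for the calls to actually run.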
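The three points above can be tried without touching the network. The following is a minimal sketch, not the script itself: `FakeResponse`, `status_of`, `make_checker`, the `probe` parameter, and the `a.example` / `b.example` hosts are all hypothetical names introduced for illustration only.

```python
class FakeResponse:
    """Hypothetical stand-in for an HTTP response object."""
    status = 200


def status_of(response):
    # getattr() raises AttributeError when the attribute is missing,
    # which is what happens when response is None.
    try:
        return 'up' if getattr(response, 'status') == 200 else 'down'
    except AttributeError:
        return 'down'


def make_checker(prev_results, probe):
    """Return an inner function that remembers prev_results (a closure)."""
    changed = []

    def check(url):
        status = probe(url)
        if url in prev_results and prev_results[url] != status:
            changed.append(url)  # the real script would send an alert here
        prev_results[url] = status
        return status

    check.changed = changed  # exposed only so the example can inspect it
    return check


# Simulated probe results: one site answers, one connection failed.
responses = {'a.example': FakeResponse(), 'b.example': None}
previous = {'a.example': 'up', 'b.example': 'up'}
checker = make_checker(previous, lambda url: status_of(responses[url]))

# map() is lazy in Python 3; list() forces the checks to run.
statuses = list(map(checker, responses))
print(statuses)         # ['up', 'down']
print(checker.changed)  # ['b.example']
```

Passing the probe in as a parameter is a choice made for this sketch so that it can run offline; the script above calls get_site_status() directly inside the inner function.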